Introduction: $2.5 Trillion Being Spent. Most of It Poorly.

Your board approved the AI budget. Your CTO has a roadmap. Your team has run a pilot that everyone called “impressive.”

And yet, nothing has shipped.

Welcome to the defining paradox of enterprise technology in 2026.

Global AI spending is on track to hit $2.5 trillion this year, a 44% increase over 2025, according to Gartner. Enterprise AI adoption has accelerated at a pace that no previous technology wave has matched. Worker access to AI tools rose by 50% in 2025 alone.

But here is the number that should stop every CIO mid-slide: 95% of generative AI pilots fail to move beyond the experimental phase, according to MIT’s GenAI Divide report. And according to PwC’s 2026 Global CEO Survey, 56% of CEOs report getting “nothing” from their AI adoption efforts.

The question in 2026 is no longer whether to implement enterprise AI. That question was settled years ago.

The question is how to be in the 5% that actually crosses the line from pilot to production — and builds a durable competitive advantage before the window closes.

This guide is the answer to that question.

1. The Industry Problem: Why Enterprise AI Is Stuck in Pilot Purgatory

The Numbers Tell Two Stories at Once

On the surface, enterprise AI has never looked healthier:

- 60% of workers now have access to AI tools (Deloitte 2026)

- 90% of enterprises have adopted general-use AI chatbots (Operator Collective, March 2026)

- 75% of workers report that AI improves the speed or quality of their work (OpenAI State of Enterprise AI)

- 66% of organizations report measurable gains in productivity and efficiency (Deloitte 2026)

- ChatGPT Enterprise weekly usage grew 8x in a single year, with the average worker sending 30% more messages

Underneath that optimism, the execution reality is far harder:

- 95% of generative AI pilots fail to reach production (MIT GenAI Divide)

- 56% of CEOs say they are getting nothing from AI investments (PwC 2026 Global CEO Survey)

- Only 34% of companies are truly reimagining their business with AI — the other 66% are using it superficially or redesigning isolated processes (Deloitte 2026)

- 84% of companies have not redesigned jobs or workflows around AI capabilities at all

- Only 21% of organizations have a mature governance model for AI agents — despite 74% planning agentic deployments (Deloitte 2026)

- 32% of senior operators cite time — not budget or technology — as the biggest implementation barrier (Operator Collective, March 2026)

The pattern is consistent: the technology has outrun the organizational capacity to deploy it.

The Five Root Causes of Enterprise AI Failure in 2026

1. Treating the pilot as the destination. The pilot success rate is actually high. Organizations can demonstrate AI works in controlled conditions. The failure happens at the transition point: scaling from 50 users to 5,000 requires infrastructure, governance, and change management that the pilot never built.

2. Shadow AI creating invisible risk. Employees are not waiting for official deployments. With 90% of enterprises having standard chatbot access, workers use unauthorized AI tools to fill the gaps. Fragmented AI ownership across teams (reported by 27.7% of organizations) means no one has a clear picture of what AI is actually running in the business.

3. No measurement framework. You cannot govern what you cannot see. The barriers to AI measurement are revealing: unclear responsibility (30.5%), fragmented ownership across teams (27.7%), and no correlation between usage and outcomes (24.4%). Enterprises spending millions on AI often cannot tell their CFO if it is working.

4. Agentic AI governance deficit. 2026 is the year agentic AI moves from concept to deployment, but 79% of organizations deploying AI agents lack mature governance models. Autonomous systems making business decisions without proper guardrails represent the single fastest-growing category of enterprise AI risk.

5. Workforce readiness gap. Deloitte’s 2026 report is unambiguous: insufficient worker skills are the number one barrier to AI integration. Only 53% of organizations are actively educating their workforce to raise AI fluency. The rest are deploying tools into a workforce that is not equipped to use them effectively.

2. The Solution: Move From Ambition to Activation

The enterprises succeeding with AI in 2026 share a common architecture — not of technology, but of organizational decision-making.

They govern before they scale. Organizations with formal AI governance frameworks reach production deployment twice as fast as those without. In 2026, with agentic AI entering the picture, governance is no longer optional — it is the infrastructure that allows everything else to move.

They follow the 10-20-70 rule. BCG research confirms the resource allocation that separates winning organizations: 10% of AI investment goes to algorithms, 20% to technology and data, and 70% to people and processes. Organizations that invert this ratio spend heavily on tools and wonder why adoption is flat.

They measure everything from Day 1. The enterprises reporting transformative impact in 2026 are not necessarily the ones with the most sophisticated AI models. They are the ones that connected usage data to business outcomes from the first day of deployment — and used that data to accelerate or redirect investment.

They treat agentic AI as the next horizon, not an afterthought. Agentic AI — autonomous systems that reason, plan, and execute multi-step tasks — is the defining enterprise AI development of 2026. Gartner projects that 40% of enterprise applications will have embedded AI agents by year-end. Organizations that are not planning for agentic deployment now will be reacting to it in 18 months.
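To make the 10-20-70 rule concrete, here is a minimal sketch that splits a total AI budget across the three buckets. The function name, the bucket keys, and the $1M example figure are illustrative assumptions, not part of the BCG research.

```python
# Percent shares from the 10-20-70 rule; the key names are illustrative.
RULE_10_20_70 = {
    "algorithms": 10,
    "technology_and_data": 20,
    "people_and_processes": 70,
}


def allocate_budget(total_usd: float, shares: dict = RULE_10_20_70) -> dict:
    """Split a total budget across buckets by percent share."""
    if sum(shares.values()) != 100:
        raise ValueError("shares must add up to 100 percent")
    return {bucket: total_usd * pct / 100 for bucket, pct in shares.items()}


if __name__ == "__main__":
    # Hypothetical $1M program budget.
    for bucket, amount in allocate_budget(1_000_000).items():
        print(f"{bucket}: ${amount:,.0f}")
```

Running the same function with an inverted split (for example 70% on technology) is a quick way to show a steering committee how far a draft budget sits from the rule.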

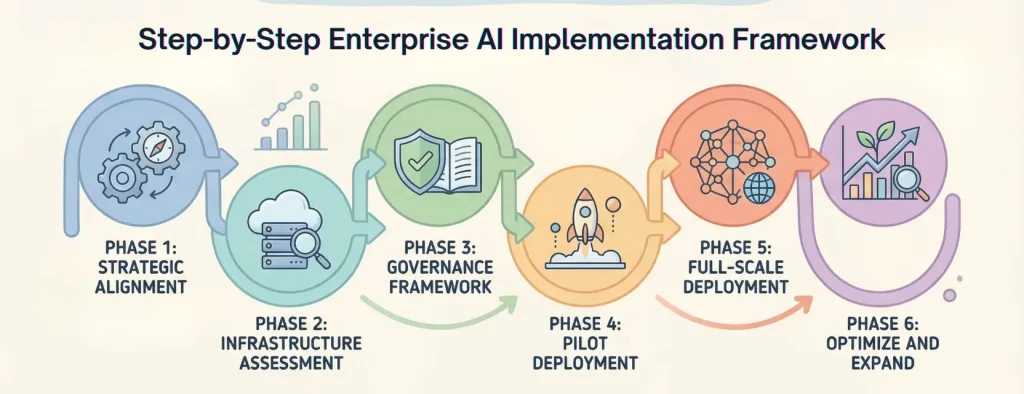

3. Step-by-Step Enterprise AI Implementation Framework

This six-phase roadmap is built on how organizations are actually deploying AI in 2026 — not how they planned to three years ago. Each phase has a clear objective, a key deliverable, and a failure mode to avoid.

Phase 1: Strategic Alignment (Weeks 1–3)

Objective: Define the business case before touching a single tool.

The single most common enterprise AI failure mode is buying the solution before defining the problem. Phase 1 exists to eliminate that.

What to do:

- Audit current workflows for AI opportunity density — time spent, error rate, decision frequency, and volume

- Build an impact vs. complexity matrix for 10–15 potential use cases, then prioritize the top 2–3

- Construct a business case with projected ROI, time-to-value, and specific success metrics (not “improve productivity” — but “reduce invoice processing time from 4 days to 4 hours”)

- Secure named executive sponsorship — AI initiatives without C-suite cover stall in procurement and budget reviews

Key deliverable: A prioritized AI opportunity map with quantified ROI projections, a signed executive sponsor, and a go/no-go criteria document for the pilot phase

Critical question that must be answered before proceeding: “If this AI system works exactly as designed, which specific business metric moves by exactly how much — and by when?”
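One way to run the impact vs. complexity exercise is a simple scored ranking. The sketch below assumes 1-to-5 scores agreed in a workshop and ranks by the impact-to-complexity ratio; the scoring scale, the ratio, and the example use cases are all assumptions for illustration, and many teams prefer a plotted 2x2 matrix instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    impact: int      # 1 (low business impact) to 5 (high)
    complexity: int  # 1 (easy to deliver) to 5 (hard)


def prioritize(use_cases, top_n=3):
    """Rank by impact per unit of complexity; ties go to the simpler use case."""
    ranked = sorted(
        use_cases,
        key=lambda u: (-(u.impact / u.complexity), u.complexity, u.name),
    )
    return ranked[:top_n]


if __name__ == "__main__":
    # Hypothetical candidates and scores.
    candidates = [
        UseCase("Invoice processing", impact=5, complexity=2),
        UseCase("IT ticket routing", impact=4, complexity=2),
        UseCase("Contract review", impact=5, complexity=4),
        UseCase("Demand forecasting", impact=4, complexity=5),
        UseCase("Customer FAQ assistant", impact=3, complexity=1),
    ]
    for use_case in prioritize(candidates):
        print(use_case.name, round(use_case.impact / use_case.complexity, 2))
```

The ranking is only a starting point for discussion: the go/no-go criteria and the executive sponsor's priorities still decide which two or three use cases move forward.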

Phase 2: Infrastructure Assessment (Weeks 3–6)

Objective: Determine what you have, what you need, and what will break at scale.

What to do:

- Conduct a data readiness audit covering quality, completeness, accessibility, and lineage

- Assess current cloud and compute infrastructure against projected AI workloads

- Map integration requirements for existing enterprise systems (ERP, CRM, HRIS, ticketing)

- Evaluate the build vs. buy decision for each prioritized use case (more on this in the comparison section below)

- Establish data governance and regulatory compliance requirements — GDPR, HIPAA, SOC 2, and crucially, the EU AI Act for European operations

The number most organizations underestimate: Data preparation consistently surprises organizations with its cost. Budget $100,000–$380,000 for data readiness work in a medium-to-large deployment. Organizations that skip a formal infrastructure audit routinely overspend the rest of their implementation by 40–60%.

2026 addition — sovereign AI assessment: Deloitte’s 2026 report found that 77% of companies now consider the country of origin of AI solutions a key factor in vendor selection. Regulatory pressure around data sovereignty is real and growing. Build your infrastructure assessment to include where data is processed, stored, and governed — not just how.

Phase 3: Governance Framework (Weeks 4–8, running parallel to Phase 2)

Objective: Build the guardrails before autonomous systems go live.

This phase is more critical in 2026 than it has ever been. With agentic AI entering production environments, the governance decisions you make now determine whether your autonomous AI systems are assets or liabilities.

What to do:

- Define an AI ethics policy and acceptable use guidelines — specific to your industry and risk profile

- Establish model performance thresholds and failure protocols for each use case

- Create a human-in-the-loop oversight framework — particularly critical for any agentic deployment, clinical decisions, financial risk, and legal applications

- Assign AI accountability roles: AI Operations Manager, Data Steward, Compliance Lead, and an AI Ethics Officer (now considered best practice in regulated industries)

- Build a risk register with specific entries for your use cases, including hallucination risk, bias risk, data leakage risk, and regulatory exposure

- Adopt or adapt ISO/IEC 42001 — the AI management system standard published in December 2023, now gaining enterprise traction as the governance reference framework

Why this matters more than ever: Only 21% of organizations have mature governance for AI agents. With 74% planning agentic deployments in the next two years, the governance deficit is growing faster than the deployment rate. Build the governance infrastructure now, while your agent systems are still small enough to control.
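A risk register does not need special tooling to get started. The sketch below models one entry per use case and risk category and surfaces the highest-scoring risks. The field names, the 1-to-5 scales, the likelihood-times-severity score, and the threshold of 12 are assumptions chosen for illustration, not requirements of ISO/IEC 42001.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    use_case: str
    category: str     # e.g. "hallucination", "bias", "data_leakage", "regulatory"
    likelihood: int   # 1 (rare) to 5 (frequent)
    severity: int     # 1 (minor) to 5 (critical)
    owner: str        # accountable role, e.g. "Data Steward"
    mitigation: str

    @property
    def score(self) -> int:
        return self.likelihood * self.severity


def top_risks(register, threshold=12):
    """Return entries at or above the threshold, highest score first."""
    flagged = [entry for entry in register if entry.score >= threshold]
    return sorted(flagged, key=lambda entry: entry.score, reverse=True)


if __name__ == "__main__":
    # Hypothetical entries for a claims triage agent.
    register = [
        RiskEntry("claims triage", "hallucination", 4, 4,
                  "AI Operations Manager", "human review of low-confidence outputs"),
        RiskEntry("claims triage", "data_leakage", 2, 5,
                  "Data Steward", "redact personal data before model calls"),
        RiskEntry("claims triage", "bias", 3, 4,
                  "Compliance Lead", "quarterly fairness audit"),
    ]
    for entry in top_risks(register):
        print(entry.category, entry.score, entry.owner)
```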

Phase 4: Pilot Deployment (Weeks 8–16)

Objective: Prove value in a controlled, measurable environment with a clear path to production.

The critical distinction in 2026: design your pilot as a production rehearsal — not a proof of concept. The organizations stuck in pilot purgatory designed experiments. The organizations in production designed deployments.

What to do:

- Select a pilot cohort of 50–200 users with representation across skill levels — not just early adopters

- Deploy the AI system alongside existing workflows with explicit comparison metrics

- Instrument everything from Day 1 — usage rates, time savings, error rates, user satisfaction, and business outcome impact

- Run a 60–90 day controlled experiment with published go/no-go criteria that all stakeholders agreed to before the pilot started

- Capture qualitative feedback weekly through structured interviews — not just usage dashboards

Metrics to track from Day 1:

- Task completion time (baseline vs. AI-assisted)

- Error rate or rework frequency

- User adoption rate (% of eligible users actively engaging — not just accounts created)

- Employee Net Promoter Score for the tool

- Direct cost or revenue impact where quantifiable

- Escalation or override rate (particularly important for decision support and agentic tools)

Pilot success threshold before scaling: 70%+ active adoption rate, measurable efficiency improvement of 15%+, no unresolved critical safety or compliance issues, and a documented production deployment plan with infrastructure requirements.
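The success threshold above can be encoded as an explicit go/no-go check so that nobody renegotiates it after the pilot. This is a minimal sketch: the field names are hypothetical, and efficiency is measured here as the reduction in average task time, which is one possible reading of "efficiency improvement."

```python
from dataclasses import dataclass


@dataclass
class PilotResults:
    eligible_users: int
    active_users: int             # actively engaging, not just accounts created
    baseline_task_minutes: float
    assisted_task_minutes: float
    open_critical_issues: int     # unresolved safety or compliance issues
    has_production_plan: bool


def evaluate_pilot(results: PilotResults):
    """Return (go, checks) using the 70% adoption and 15% efficiency thresholds."""
    adoption = results.active_users / results.eligible_users
    efficiency_gain = (
        results.baseline_task_minutes - results.assisted_task_minutes
    ) / results.baseline_task_minutes
    checks = {
        "adoption_at_least_70_percent": adoption >= 0.70,
        "efficiency_gain_at_least_15_percent": efficiency_gain >= 0.15,
        "no_open_critical_issues": results.open_critical_issues == 0,
        "production_plan_documented": results.has_production_plan,
    }
    return all(checks.values()), checks


if __name__ == "__main__":
    # Hypothetical pilot: 150 of 200 eligible users active, tasks 40 -> 32 minutes.
    go, checks = evaluate_pilot(PilotResults(200, 150, 40.0, 32.0, 0, True))
    print("GO" if go else "NO-GO", checks)
```

Note that a pilot with 20 enthusiastic power users out of 200 eligible fails the adoption check no matter how large its time savings are.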

The pilot failure pattern to avoid: Running a 90-day pilot, declaring success based on a 20-person power user group, and attempting to scale to 2,000 users with the same architecture. This is how most organizations enter pilot purgatory — not with a failed pilot, but with a misleadingly successful one.

Phase 5: Full-Scale Deployment (Weeks 16–28)

Objective: Move from pilot to production with organizational change management running in parallel.

What to do:

- Architect for enterprise scale from the start — multi-region, multi-tenant, with MLOps monitoring and drift detection built in

- Launch structured onboarding with role-specific training — not a single all-hands demo

- Assign AI Champions in each department: peer advocates who support adoption and surface friction points in real time

- Communicate the “why,” the “what changes,” and specifically “what is in it for me” to every affected team before deployment, not after

- Build explicit processes for AI errors and escalations — employees need to know what to do when the AI is wrong, or they will stop using it

The deployment insight that most implementation guides miss: Deloitte’s 2026 research confirms that the AI skills gap is the number one barrier to AI integration. The technology is ready. The workforce often is not. Organizations that allocate 70% of their implementation resources to people and processes (the BCG 10-20-70 rule) consistently outperform those that treat deployment as a purely technical exercise.

Agentic AI deployment note (2026 specific): If this deployment includes any agentic AI — systems that autonomously execute multi-step tasks — apply additional governance at this phase. Implement graduated autonomy: start with human approval required, then move to human notification, then to fully autonomous operation only after performance data justifies it. The enterprises that have deployed agentic AI successfully in 2026 follow this pattern without exception.
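Graduated autonomy can be modeled as three levels with an explicit promotion rule. In the sketch below, the level names mirror the three stages described above; the promotion thresholds (500 reviewed decisions, 95% accuracy) are placeholder assumptions that each organization would set per use case.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    APPROVAL_REQUIRED = 1   # a human approves every action before it runs
    NOTIFY_HUMAN = 2        # the agent acts, then notifies a human
    FULLY_AUTONOMOUS = 3    # the agent acts within guardrails, audited afterwards


def next_level(current, reviewed_decisions, accuracy,
               min_decisions=500, min_accuracy=0.95):
    """Promote one level only when performance data justifies it."""
    if current is Autonomy.FULLY_AUTONOMOUS:
        return current
    if reviewed_decisions >= min_decisions and accuracy >= min_accuracy:
        return Autonomy(current + 1)
    return current


if __name__ == "__main__":
    level = Autonomy.APPROVAL_REQUIRED
    # Hypothetical review data after the first quarter in production.
    level = next_level(level, reviewed_decisions=620, accuracy=0.97)
    print(level.name)
```

A production version would likely also define when an agent is demoted after an incident; that rule is left out here because the source text does not describe one.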

Phase 6: Optimize and Expand (Month 7 Onward)

Objective: Continuously improve, measure, and build the AI flywheel.

What to do:

- Hold monthly AI performance reviews with cross-functional stakeholders and hard metrics

- Monitor for model drift quarterly — retrain on fresh data before performance degrades

- Maintain a Shadow AI audit: employees are using tools you have not sanctioned, and you need visibility into that

- Document an internal AI playbook capturing what worked, what did not, and how to deploy the next use case faster

- Report AI ROI to executive sponsors quarterly with specific dollar figures

- Identify adjacent use cases that can leverage the existing infrastructure at 60–70% lower deployment cost

The compounding advantage: Organizations that maintain a rigorous optimization cadence see ROI accelerate between months 18 and 36. The infrastructure, governance, and change management you built for the first use case makes every subsequent deployment faster and cheaper. This is the flywheel that early movers are building right now — and it compounds.
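For the drift monitoring step in this phase, one common technique (not prescribed by this guide) is the Population Stability Index, which compares the distribution of a model input or score at deployment time with its current distribution. The sketch below is a plain-Python version under stated assumptions: equal-width bins taken from the baseline range, a small floor to avoid division by zero, and the widely used rule of thumb that a PSI above roughly 0.25 signals a significant shift.

```python
import math


def population_stability_index(baseline, current, bins=10, floor=1e-6):
    """PSI between two samples, using equal-width bins over the baseline range."""
    low, high = min(baseline), max(baseline)
    if high == low:
        raise ValueError("baseline needs at least two distinct values")
    width = (high - low) / bins

    def bin_shares(values):
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            index = min(max(index, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[index] += 1
        # Floor empty bins so the logarithm below is always defined.
        return [max(count / len(values), floor) for count in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


if __name__ == "__main__":
    # Hypothetical model scores at launch vs. a later quarter.
    launch_scores = [i / 100 for i in range(100)]
    later_scores = [score + 0.3 for score in launch_scores]
    print(round(population_stability_index(launch_scores, later_scores), 2))
```

Teams already using a monitoring platform from the tools section would normally rely on its built-in drift metrics rather than maintain their own.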

How to Audit Shadow AI:

- Review SaaS expense management tools (Zylo, Productiv) for unauthorized AI subscriptions

- Check IT department firewall logs for AI domain access

- Survey employees with questions such as “What AI tools do you use daily?”

- Audit browser extensions on company devices

Remediation costs: $50K-$200K for mid-market enterprises discovering widespread unauthorized tool usage
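The firewall log review can be partly automated. The sketch below counts requests to AI domains that are not on the sanctioned list. The log format (timestamp, user, domain separated by spaces), the short domain watchlist, and the choice of which tool counts as sanctioned are all assumptions for illustration; real firewall exports vary by vendor and a real watchlist would be much longer.

```python
from collections import Counter

# Illustrative, incomplete watchlist of AI tool domains.
AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}


def unsanctioned_ai_usage(log_lines, sanctioned=frozenset()):
    """Count hits per (user, domain) for AI domains that are not sanctioned."""
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not match the assumed format
        _timestamp, user, domain = parts
        if domain in AI_DOMAINS and domain not in sanctioned:
            hits[(user, domain)] += 1
    return hits


if __name__ == "__main__":
    # Hypothetical log excerpt; chatgpt.com is treated as the sanctioned tool.
    sample = [
        "2026-03-01T09:00:00 alice claude.ai",
        "2026-03-01T09:05:00 alice claude.ai",
        "2026-03-01T09:06:00 bob chatgpt.com",
        "2026-03-01T09:07:00 carol example.com",
    ]
    for (user, domain), count in unsanctioned_ai_usage(sample, {"chatgpt.com"}).items():
        print(user, domain, count)
```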

4. Enterprise AI Implementation Costs: The Honest 2026 Breakdown

The cost landscape shifted meaningfully in 2026. Agentic AI has introduced a new tier of complexity and investment. Here are real numbers.

Initial Investment by Organization Size

| Organization Size | First-Year Cost Range | Key 2026 Cost Drivers |
|---|---|---|
| Mid-Market (200–1,500 employees) | $250K – $900K | Agentic workflow build, integration depth, data readiness |
| Large Enterprise (1,500–50,000 employees) | $900K – $5M | Multi-agent systems, MLOps, sovereign AI compliance |
| Global Enterprise (50,000+ employees) | $5M – $20M+ | Cross-region deployment, custom fine-tuning, governance infrastructure |

Cost by AI System Type (2026 Benchmarks)

| AI System | Development Cost Range | Monthly Ops Cost |
|---|---|---|
| AI Chatbot / Conversational Agent | $40K – $250K | $500 – $3,000 |
| Predictive Analytics System | $60K – $500K | $2,000 – $8,000 |
| Task Automation Agent (simple) | $20K – $35K | $1,000 – $5,000 |
| Decision Support Agent (mid-complexity) | $40K – $70K | $3,200 – $8,000 |
| Autonomous Agentic AI System | $80K – $200K+ | $3,200 – $13,000 |
| Multi-Agent Enterprise System | $350K – $900K+ | $10,000 – $50,000+ |
| Generative AI Platform (custom) | $150K – $1.2M | $5,000 – $25,000 |

Full Implementation Cost Breakdown

Technology and Infrastructure (35–45% of total budget)

- Cloud compute and storage: $50K–$500K/year

- AI platform licensing: $50K–$2M/year depending on vendor and scale

- LLM API costs: $20K–$200K/year (scale-dependent)

- Vector database and RAG infrastructure: $15K–$80K/year

Data Readiness (20–30% of total budget)

- Data audit and cleansing: $100K–$380K

- Data pipeline and integration engineering: $75K–$300K

- Ongoing data governance tooling: $30K–$100K/year

- Sovereign AI data localization (new in 2026): $25K–$150K depending on jurisdictions

Talent and Training (20–25% of total budget)

- AI/ML engineers: $150K–$350K/year per FTE (demand is still outpacing supply)

- AgentOps specialists (new 2026 role): $130K–$280K/year per FTE

- Change management and structured training programs: $50K–$200K

- External AI implementation consulting: $100K–$1M depending on scope

Governance and Compliance (5–15% of total budget — growing in 2026)

- Legal and regulatory review (including EU AI Act compliance): $25K–$200K

- AI risk and safety tooling: $30K–$150K/year

- GDPR, HIPAA, SOC 2 compliance for AI-specific systems: $5K–$25K per certification

- ISO/IEC 42001 AI management system certification: $20K–$75K

Hidden Costs That Derail 2026 Budgets

These four costs are reported as surprises by the majority of organizations post-deployment:

- AgentOps infrastructure — Ongoing observability, prompt versioning, and feedback loops for production AI agents cost $3,200–$13,000/month. Most teams do not budget for this until the invoice arrives.

- Fine-tuning iteration cycles — Each failed fine-tuning run adds compute cost and time. Budget $10K–$50K for fine-tuning OpenAI or Anthropic models; $50K–$200K for custom model training.

- Shadow AI remediation — When the formal AI audit reveals unauthorized tools running across the organization, there are legal, security, and data governance costs to remediate.

- Pilot-to-production infrastructure delta — The gap between pilot architecture and production-grade architecture consistently costs 2–3x the pilot build cost.

ROI Timeline: 2026 Benchmarks

| Use Case Category | Time to Break-Even | Typical Annual Return |
|---|---|---|
| Customer service chatbot / AI agent | 2–6 months | $500K–$2M cost deflection |
| Developer AI tools (coding assistants) | 3–6 months | 15–30% productivity gain |
| Document processing and automation | 6–12 months | 40–70% cost reduction |
| Sales intelligence and lead scoring | 3–8 months | 45% productivity increase |
| Supply chain / operations AI | 12–24 months | $1M–$15M+ at enterprise scale |
| Clinical or financial risk AI | 18–36 months | High ROI, long compliance runway |

The enterprise ROI benchmark: Organizations report average ROI of 200–500% within 6 months for well-implemented AI agents in customer service and sales automation. For complex enterprise deployments, IDC places break-even at 12–36 months. The critical variable remains data quality — organizations with clean, well-governed data consistently achieve ROI 40–60% faster.

5. Tools and Technologies: The 2026 Enterprise AI Stack

The stack has evolved significantly. Agentic AI, sovereign AI compliance, and AgentOps are the three layers that did not exist in most enterprise stacks two years ago.

Foundation Models and AI Platforms

| Platform | Best For | Key 2026 Differentiator |
|---|---|---|
| Microsoft Azure OpenAI | GPT-4o and o-series for enterprise compliance | Copilot Studio for no-code agent building |
| Google Vertex AI | End-to-end MLOps, Gemini integration | Multimodal AI on GCP |
| AWS Bedrock | Multi-model access in AWS ecosystem | Cross-model benchmarking and RAG integration |
| Anthropic Claude (Enterprise) | Legal, financial, high-accuracy reasoning | Extended context, strong instruction-following |
| IBM watsonx | Governance-first AI for regulated industries | Built-in AI ethics and bias detection |

Agentic AI and Workflow Automation (2026 Priority Layer)

- Microsoft Copilot Studio — Low-code agent builder with deep Microsoft 365 and Azure integration

- Salesforce Agentforce — CRM-native autonomous agents for sales, service, and commerce

- ServiceNow AI — IT and enterprise workflow automation with agentic capabilities

- LangChain / LangGraph — Framework for building multi-step, multi-tool AI agent pipelines

- CrewAI — Multi-agent orchestration for complex enterprise workflows

- AutoGen (Microsoft) — Conversational agent frameworks for multi-agent task execution

MLOps and AgentOps

- Databricks MLflow — Experiment tracking, model registry, and deployment pipelines

- Weights & Biases — Model performance tracking, now with agent evaluation support

- Arize AI / Fiddler AI — Production model monitoring, drift detection, explainability

- LangSmith — Purpose-built observability and evaluation for LLM and agent applications

Data Infrastructure

- Snowflake — Unified data platform with Cortex AI for in-platform AI workloads

- Databricks Lakehouse — Combined data engineering and ML platform with Unity Catalog governance

- dbt + Fivetran — Data transformation and pipeline automation

AI Governance and Compliance

- Credo AI — AI governance platform for policy management and compliance tracking

- Arthur AI — AI fairness, performance monitoring, and risk management

- Holistic AI — Regulatory compliance and EU AI Act readiness

- TruEra — Model intelligence and production AI quality management

Build vs. Buy vs. Hybrid in 2026

| Dimension | Build | Buy | Hybrid (Recommended for Most) |
|---|---|---|---|
| Time to deploy | 12–24 months | 1–3 months | 3–9 months |
| First-year cost | $500K–$5M+ | $50K–$500K | $200K–$2M |
| Customization depth | Highest | Limited | High via RAG and fine-tuning |
| Sovereign AI compliance | Full control | Vendor-dependent | Configurable |
| Agentic capability | Custom-built | Platform-limited | Best of both |
| Best for | Proprietary data moats | Commodity workflows | 80% of enterprise use cases |

2026 verdict: Most enterprises now build on top of commercial foundation models using RAG (retrieval-augmented generation) and fine-tuning on proprietary data. This hybrid approach delivers 90%+ of the customization value of a fully custom model at 10–20% of the cost, and it deploys in months rather than years.

6. Case Studies: What Enterprise AI Looks Like in 2026

Case Study 1: Manufacturing — Agentic AI in Procurement (Danfoss)

Problem: Global manufacturer processing thousands of purchase orders manually. Average response time: 42 hours. Human error rate creating downstream supply chain disruption.

Solution: Autonomous agentic AI system built to handle transactional purchase order decisions without human intervention for standard cases.

Implementation: 6-month deployment with phased autonomy — started with human approval required, graduated to fully autonomous execution for standard orders.

Results:

- 80% of transactional purchase order decisions now fully automated

- Response time reduced from 42 hours to near real-time

- $15 million in annual savings

- 95% accuracy rate maintained consistently

- Payback period: 6 months

Key lesson: Graduated autonomy — starting with human-in-the-loop and earning the right to full automation through demonstrated performance — is the governance model that makes agentic AI deployable in mission-critical environments.

Case Study 2: Financial Services — Insurance Claims Processing at Scale

Problem: High-volume insurance claim processing requiring manual review, causing delays and high labor cost across a 10,000-claims-per-month operation.

Solution: AI agent system handling initial claim assessment, data extraction, and routing — with human review retained for complex or high-value claims.

Results:

- $370,000 in monthly cost savings — $4.4 million annually

- Payback period: 2.3 months

- Processing speed improvement: 65% reduction in cycle time

- Human reviewers redirected to complex claims requiring judgment

Key lesson: In regulated industries, the agent does not need to replace human judgment entirely to deliver transformative ROI. Automating the routine 80% of cases creates massive value while keeping humans in the loop for the 20% that require it.
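The payback math in this case study is easy to reproduce, and the same few lines work for any business case. The sketch below uses the two reported figures ($370,000 in monthly savings and a 2.3-month payback). The implied upfront cost of roughly $851K is a back-of-envelope inference from those two numbers, not a figure reported in the case study, and the function names are illustrative.

```python
def annual_savings(monthly_savings: float) -> float:
    """Annualize a monthly savings figure."""
    return monthly_savings * 12


def payback_months(upfront_cost: float, monthly_savings: float) -> float:
    """Months needed for cumulative savings to cover the upfront cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return upfront_cost / monthly_savings


def implied_upfront_cost(monthly_savings: float, payback: float) -> float:
    """Back out the upfront cost from a reported payback period."""
    return monthly_savings * payback


if __name__ == "__main__":
    monthly = 370_000  # reported monthly savings
    print(f"Annual savings: ${annual_savings(monthly):,.0f}")
    print(f"Implied upfront cost: ${implied_upfront_cost(monthly, 2.3):,.0f}")
```

This simple form ignores ongoing operating costs, so a real business case should subtract monthly run costs from the savings before dividing.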

Case Study 3: Technology — Developer Productivity Compounding

Problem: Engineering velocity declining as codebase complexity grew. Senior engineers spending increasing time on routine code generation, documentation, and test writing.

Solution: Enterprise AI coding assistant deployed across 3,000 developers. Then expanded to AI agents for automated code review, test generation, and documentation.

Results (Year 1):

- 15%+ improvement in developer velocity

- Senior engineers redirected 30% of previously routine work to higher-complexity problems

- Positive ROI achieved in 6 months

Results (Year 2 — compounding):

- Second AI use case (automated code review) deployed at 65% lower cost using existing infrastructure

- Developer velocity improvement expanded to 28% as agents handled more of the routine workflow

- Three additional use cases in production using the same MLOps and governance infrastructure

Key lesson: The compounding effect is real and measurable. Each AI deployment makes the next one 40–70% cheaper. The enterprises building AI infrastructure in 2026 are buying down the cost of every future deployment.

Case Study 4: Healthcare — Ambient AI Documentation at Scale

Problem: Physicians averaging 2–3 hours per day on documentation, driving burnout and reducing patient-facing time across a 10,000-employee health system.

Solution: Ambient AI documentation system transcribing and structuring clinical notes in real time, integrated into the existing EHR workflow.

Implementation timeline: 6 months, with 8 weeks of structured physician change management before the pilot.

Results:

- 53% reduction in documentation time per patient encounter

- Physician satisfaction scores increased by 34 points

- Break-even: 14 months post-deployment

- Transcription service costs reduced by $2.1 million annually

Key lesson: In clinical environments, trust is the deployment variable — not technology. Organizations that skipped change management in healthcare AI deployments averaged 40% lower adoption. The 8 weeks of pre-pilot engagement was not overhead. It was the implementation.

7. Comparison: Enterprise AI Approaches in 2026

Generative AI vs. Agentic AI: What Enterprises Are Actually Deploying

| Dimension | Generative AI (2023–2025 wave) | Agentic AI (2026 wave) |
|---|---|---|
| What it does | Generates content, answers questions, summarizes | Plans, decides, and executes multi-step tasks autonomously |
| Human involvement | High: human prompts and reviews everything | Low: agent operates within defined guardrails |
| Deployment maturity | Widespread, well-understood | Rapidly scaling: 79% of orgs have deployed in some form |
| Governance complexity | Moderate | High: requires graduated autonomy and AgentOps |
| ROI potential | Strong for productivity | Higher: transforms workflows, not just assists |
| Top enterprise use cases | Writing, summarization, code completion, Q&A | Procurement, claims processing, customer service, IT ops |

The Three Levels of AI Transformation in 2026 (Deloitte)

Deloitte’s 2026 research classifies enterprise AI deployment across three distinct levels. Knowing which level your organization is at — and where you need to be — shapes every decision in this guide.

Level 1 — Superficial AI (37% of organizations): Using AI tools with little or no change to underlying processes. Employees have access but workflows are unchanged. This group reports the lowest ROI and is most at risk of falling behind.

Level 2 — Process Redesign (30% of organizations): Redesigning key workflows and processes while keeping the core business model intact. Measurable productivity and efficiency gains. This is where most enterprises in active implementation currently sit.

Level 3 — Business Transformation (34% of organizations): Creating new products, revenue streams, and business models enabled by AI. Twice as many leaders at this level report transformative impact compared to last year. This is the destination — and the 5% production success rate suggests the path there requires exactly the framework this guide describes.

What is enterprise AI implementation?

Enterprise AI implementation is the structured deployment of AI systems across an organization. Key components:

- 6-phase process: Alignment, infrastructure, governance, pilot, deployment, optimization

- Timeline: 16-28 weeks for standard deployments

- Cost: $250K-$5M first year (varies by organization size)

- Critical success factor: 70% investment in people/processes, not just technology

- Break-even: 2–36 months depending on complexity

95% of AI pilots fail to reach production. Success requires governance frameworks, data readiness, and change management—not just advanced models.

FAQ:

1. How long does enterprise AI implementation take in 2026?

A standard enterprise AI deployment takes 16–28 weeks from strategic alignment to first production deployment, given dedicated resources and executive support. Simple agentic use cases (invoice processing, IT ticket routing, customer FAQs) can reach production in 6–12 weeks. Complex multi-agent systems spanning departments typically require 6–12 months. The biggest timeline risk remains data infrastructure readiness, which adds 3–6 months when organizations discover data quality problems mid-implementation. Developer AI tools are the second-fastest category to pay back in 2026: they consistently deliver positive ROI in 3–6 months and serve as the ideal first deployment for organizations new to enterprise AI.

2. How much does enterprise AI implementation cost in 2026?

Mid-market enterprises (200–1,500 employees) should budget $250,000 to $900,000 in year one, including agentic workflow builds, integrations, data readiness, and training. Large enterprises typically invest $900,000 to $5 million. The hidden costs that consistently blow budgets are: data preparation ($100K–$380K, reported as a surprise by 99% of organizations), AgentOps infrastructure ($3,200–$13,000/month for production agent systems), and pilot-to-production infrastructure gaps that cost 2–3x the original pilot build. Plan for ongoing operational costs at 20–30% of your initial implementation budget annually, increasing to 35–40% for agentic AI deployments due to higher monitoring requirements.

3. What is the ROI of enterprise AI in 2026?

The range has widened significantly. Well-implemented AI agents in customer service and sales automation now deliver 200–500% ROI within 6 months, according to McKinsey’s 2026 AI research. The Danfoss procurement case demonstrates $15 million annual savings with a 6-month payback. For complex, regulated-industry deployments, break-even typically falls between 12 and 36 months. The critical variable is always data quality — organizations with well-governed data achieve ROI 40–60% faster. The measurement paradox of 2026: nearly three-quarters of organizations say their most advanced AI initiatives met or exceeded ROI expectations, yet 56% of CEOs report getting nothing from AI. The gap between these numbers lives in measurement quality, not deployment quality.

4. What is agentic AI and why does it matter for enterprise implementation in 2026?

Agentic AI refers to autonomous AI systems that can plan, decide, and execute multi-step business tasks with minimal human intervention. Unlike generative AI, which generates content in response to prompts, agentic AI acts: it opens tickets, processes invoices, rebooks flights, routes leads, and executes purchasing decisions — within defined guardrails. Gartner projects that 40% of enterprise applications will embed AI agents by end of 2026. For enterprise implementation, agentic AI requires additional governance investment: graduated autonomy frameworks, AgentOps monitoring ($3,200–$13,000/month), and human-in-the-loop oversight for high-stakes decisions. Organizations that govern agentic AI correctly are already seeing the highest ROI in the enterprise AI landscape.

5. What are the biggest risks in enterprise AI implementation in 2026?

The top five risks, updated for 2026:

(1) Pilot purgatory: 95% of AI pilots fail to reach production because infrastructure planning during the experimental phase is inadequate.

(2) Shadow AI: employees using unauthorized AI tools create legal, security, and data governance exposure that organizations often discover months after the fact.

(3) Agentic AI governance deficit: only 21% of organizations deploying AI agents have mature governance models, which leaves autonomous systems making business decisions without adequate oversight.

(4) Workforce readiness failure: insufficient worker AI fluency remains the number one barrier to integration, according to Deloitte’s 2026 survey.

(5) Measurement collapse: organizations that cannot connect AI usage to business outcomes cannot justify continued investment, defend against CFO scrutiny, or make intelligent decisions about where to scale.

Conclusion: The Window Is 2026–2027. After That, You Are Catching Up.

Enterprise AI is no longer the technology investment of the future. It is the operational reality of the present.

The organizations building AI-powered competitive advantages in 2026 are not the ones with the largest budgets or the most sophisticated models. They are the ones that governed before they scaled. They invested 70% of their AI resources in people and processes. They designed pilots as production rehearsals instead of experiments. They tracked ROI from Day 1. And when agentic AI arrived, they deployed it with graduated autonomy instead of hoping it would govern itself.

The 95% that fail share the inverse of that pattern. They deploy tools into unprepared workforces. They declare pilot success and discover production requires a completely different infrastructure. They measure output volume and call it impact. And they plan for agentic AI without building the governance that makes autonomous systems safe to deploy.

The technology gap between enterprises is closing. The organizational readiness gap is widening.

You now have the complete 2026 playbook: the six phases, the updated cost data, the agentic AI framework, the four case studies with real numbers, and the governance architecture that separates the 5% in production from the 95% still running experiments.

The question is not whether your enterprise needs AI. That was decided for you by your competitors, your customers, and your board.

The question is whether you implement it with the discipline that earns your place in the 5% — or spend another year in pilot purgatory explaining to your CFO why the impressive demo never shipped.

→ Start here: Complete Phase 1 this week. Map your 10–15 highest-volume, most repetitive workflows. Build the impact vs. complexity matrix. Identify your executive sponsor. Everything else follows from that clarity — and the compounding advantage of early movers starts the day you begin.
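The impact vs. complexity matrix from this first step can start as a simple scored list. The sketch below assumes each workflow gets a 1–5 score on both axes; the `Workflow` class, the quadrant labels, the threshold of 3, and the sample workflows are all hypothetical placeholders, not part of the playbook itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    name: str
    impact: int      # 1 (low) to 5 (high)
    complexity: int  # 1 (easy) to 5 (hard)


def quadrant(w: Workflow, threshold: int = 3) -> str:
    """Place a workflow in one of four quadrants of the matrix."""
    high_impact = w.impact >= threshold
    high_complexity = w.complexity >= threshold
    if high_impact and not high_complexity:
        return "quick win"
    if high_impact and high_complexity:
        return "strategic bet"
    if not high_impact and not high_complexity:
        return "fill-in"
    return "deprioritize"


def prioritize(workflows: list[Workflow]) -> list[Workflow]:
    """Highest impact first; ties broken by lower complexity, then name."""
    return sorted(workflows, key=lambda w: (-w.impact, w.complexity, w.name))


if __name__ == "__main__":
    candidates = [
        Workflow("legacy data migration", impact=2, complexity=5),
        Workflow("invoice matching", impact=5, complexity=2),
        Workflow("meeting notes", impact=2, complexity=1),
        Workflow("lead routing", impact=4, complexity=4),
    ]
    for w in prioritize(candidates):
        print(f"{w.name}: {quadrant(w)}")
```

Scoring 10–15 workflows this way takes an afternoon, and the "quick win" quadrant is a reasonable place to look for the first pilot.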

Not sure what AI will cost your organization? Contact us today to schedule a call with our expert team.

External Authoritative Sources (2026)

- Deloitte State of AI in the Enterprise 2026 — https://www.deloitte.com/us/en/what-we-do/capabilities/applied-artificial-intelligence/content/state-of-ai-in-the-enterprise.html

- PwC 2026 Global CEO Survey — https://www.pwc.com/gx/en/ceo-survey

- MIT GenAI Divide Report — Referenced via Larridin State of Enterprise AI 2026 — https://larridin.com/solutions/ai-adoption-the-complete-enterprise-guide-2026